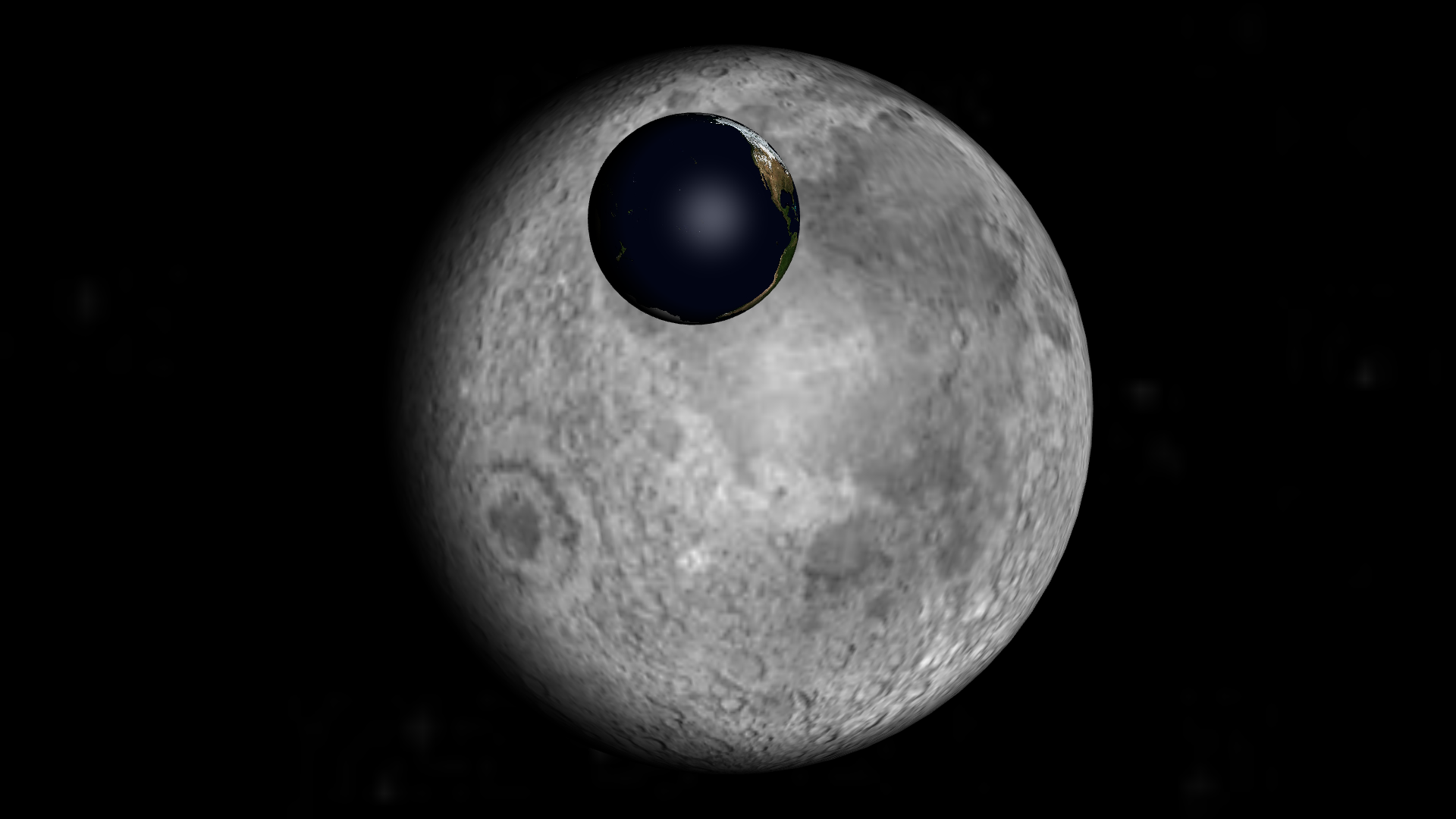

Guess what. We’re going to talk about floating point arithmetic and what happens when using distances that range from the millimeter to Pluto’s distance to the Sun. Yes, yet again.

Floating Point Arithmetic

OpenGL uses single-precision floating point arithmetic. In any case, that’s what your GPU prefers to use. This means you can be as precise as 60 parts in a billion, but no more. That’s more than enough for drawing human-sized objects: the error is much smaller than the width of a human hair. And it causes no problem when drawing the Solar System: the error at Pluto’s orbit is 352 km, which is not visible when looking at the whole thing. But you are going to have issues drawing a human if you do not know whether they are right here or 352 km away.
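To put numbers on this, here is a small Python sketch. It is only an illustration: the helper names `to_f32` and `f32_spacing`, and the figure of 5.9e12 m for Pluto’s distance, are assumptions made for the example. It rounds values to single precision with the standard `struct` module and computes the gap between two neighbouring single-precision values.

```python
import math
import struct

PLUTO_DISTANCE_M = 5.9e12  # assumed rough Sun-Pluto distance, in metres


def to_f32(x):
    """Round a Python float (double precision) to the nearest single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def f32_spacing(x):
    """Gap between consecutive single-precision values around x (normal, positive x)."""
    _, exponent = math.frexp(to_f32(x))  # x = m * 2**exponent with 0.5 <= m < 1
    return math.ldexp(1.0, exponent - 24)  # 24 significant bits in single precision


print(f32_spacing(1.8))               # human scale: a fraction of a micrometre
print(f32_spacing(PLUTO_DISTANCE_M))  # Pluto scale: 524288.0 m, about 524 km

# A point 100 km further out is stored as exactly the same single-precision number.
print(to_f32(PLUTO_DISTANCE_M + 100_000.0) == to_f32(PLUTO_DISTANCE_M))  # True
```

The exact gap depends on which power of two the distance falls under, so it does not match the 60-parts-in-a-billion estimate to the kilometre, but it is the same order of magnitude: hundreds of kilometres.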

We can avoid the main problem by doing most computations in double precision and centering around the camera before switching to single precision for the rendering, as I briefly touched on in a previous article. And that’s fine! We do not need more than single precision to locate a pixel on the screen after all.
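The order of the two steps matters, as this minimal Python sketch shows. The function names and the one-dimensional positions are hypothetical, chosen only to keep the example short: a camera sits at roughly Pluto’s distance from the origin, and an object is 2 m in front of it.

```python
import struct


def to_f32(x):
    """Round a Python float (double precision) to the nearest single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def camera_relative_then_cast(position, camera):
    """Subtract in double precision first, then convert the small result for rendering."""
    return to_f32(position - camera)


def cast_then_camera_relative(position, camera):
    """Convert the huge coordinates to single precision first, then subtract."""
    return to_f32(to_f32(position) - to_f32(camera))


camera = 5.9e12           # assumed camera position, in metres from the origin
position = camera + 2.0   # an object 2 m in front of the camera

print(camera_relative_then_cast(position, camera))  # 2.0
print(cast_then_camera_relative(position, camera))  # 0.0: the object collapses onto the camera
```

Once the coordinates are relative to the camera, the nearby objects that need fine precision are also the ones with small coordinates, which is exactly where single precision is at its best.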

So far so good. But using a wide range of coordinates in the scene surfaces another issue: the naive use of the depth-buffer.

The Depth-Buffer

3D rendering, at its core, is not very complex. You ask to draw some triangles, your GPU does some math to work out where they should appear on your screen, and it gives colors to the pixels that end up inside them. And that’s it! Well, almost. There is one tiny remaining problem.

Each pixel might lie in the direction of several objects. But, most of the time, it should take the color of the closest one. If we just draw all the objects, overwriting the pixels each time, we might show something which is supposed to be hidden!

The most intuitive way to fix this is to just draw the objects in the appropriate order! This is z-sorting. But it is not a trivial task, and will not work in all cases (when A is partially behind B, B partially behind C and C partially behind A).
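As a rough illustration of z-sorting, here is a toy Python sketch on a one-dimensional “screen”. The `paint_sorted` function and the `(distance, color, pixels)` tuples are hypothetical and only serve the example: objects are drawn from the farthest to the closest, each one overwriting whatever is already there.

```python
def paint_sorted(width, objects):
    """Draw objects back to front; each object is (distance, color, covered_pixels)."""
    frame = [None] * width
    # Farthest first, so that closer objects overwrite what is behind them.
    for _distance, color, pixels in sorted(objects, key=lambda o: o[0], reverse=True):
        for x in pixels:
            frame[x] = color
    return frame


scene = [
    (1.0, "near", [1, 2]),        # close object covering the middle pixels
    (5.0, "far", [0, 1, 2, 3]),   # distant object covering the whole row
]
print(paint_sorted(4, scene))  # ['far', 'near', 'near', 'far']
```

The sketch assumes each object has a single distance. That assumption is what breaks down in the cyclic case: when A, B and C each hide part of the next one, no single ordering is correct.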

The more robust way is to remember, for each pixel, how far away the surface drawn there is. When drawing something over this pixel, we check whether the new value is closer. If it is, we use the new value, otherwise we keep the old one. This is the depth-buffer. For each pixel, we’ll track its current color (red, green, blue and alpha), and its current depth.
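The depth test itself fits in a few lines. The Python sketch below is a toy software version on a one-dimensional “screen”, with hypothetical names (`make_buffers`, `draw_fragment`); a real GPU does the same comparison in hardware, and on an encoded depth value rather than on the raw distance used here.

```python
import math


def make_buffers(width):
    """Return an empty color buffer and a depth buffer initialised to 'infinitely far'."""
    return [None] * width, [math.inf] * width


def draw_fragment(color_buf, depth_buf, x, depth, color):
    """Write the fragment only if it is closer than what pixel x already holds."""
    if depth < depth_buf[x]:
        depth_buf[x] = depth
        color_buf[x] = color


color_buf, depth_buf = make_buffers(4)
# The draw order no longer matters: the near object is drawn first and still wins.
for x in (1, 2):
    draw_fragment(color_buf, depth_buf, x, 1.0, "near")
for x in (0, 1, 2, 3):
    draw_fragment(color_buf, depth_buf, x, 5.0, "far")

print(color_buf)  # ['far', 'near', 'near', 'far']
```

Because the decision is made per pixel, the cyclic case from above is handled too: each pixel simply keeps whichever surface is closest at that spot.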

Precision Issues

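By default, OpenGL actually sets the depth-buffer value (d) from the inverse of the distance from the camera (z): d = 1 / z, up to a scale and an offset. This is a side effect of the perspective transform, which is what makes things look 3D. The catch is that 1 / z changes quickly close to the camera and hardly at all far away, so nearly all of the depth-buffer’s precision is spent on the closest objects, and distant ones can no longer be told apart.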
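Here is a small Python sketch of what this means in practice. It uses the usual form of the mapping with a standard perspective projection and the default depth range, d = (1/n − 1/z) / (1/n − 1/f), which is d = 1 / z up to a scale and an offset. The near plane n (1 mm), the far plane f (roughly Pluto’s distance) and the 24-bit integer depth buffer are all assumptions picked for the example.

```python
NEAR = 1.0e-3     # assumed near plane: 1 mm
FAR = 5.9e12      # assumed far plane: roughly Pluto's distance to the Sun, in metres
DEPTH_BITS = 24   # assumed size of an integer depth buffer


def window_depth(z, near=NEAR, far=FAR):
    """Standard perspective depth in [0, 1]: linear in 1/z, not in z."""
    return (1.0 / near - 1.0 / z) / (1.0 / near - 1.0 / far)


def quantize(d, bits=DEPTH_BITS):
    """Integer actually stored in a fixed-point depth buffer with the given bit count."""
    return round(d * (2 ** bits - 1))


# About 99.9% of the depth range is already used up one metre from the camera.
print(window_depth(1.0))

# Objects 1000 km and 2000 km away end up with the same stored depth.
print(quantize(window_depth(1.0e6)) == quantize(window_depth(2.0e6)))  # True
```

With these numbers, anything beyond a few kilometres lands on the last representable depth value, so the depth test can no longer decide which of two distant objects is in front.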

There are various ways to fix this. The Outerra blog offers two approaches for a logarithmic depth buffer:

- explicitly set the depth-buffer from z (or w) in the fragment shader

- override z when it will not affect perspective (which relies on w) and let OpenGL use it to set the depth-buffer

The first method works very well, but disables various optimizations, so it slows down rendering a bit. The second method lets OpenGL do its thing — so it is fast — but it does not work well with large triangles close to the camera.
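Both methods store a logarithm of the distance instead of its inverse. The Python sketch below is a plain illustration of such a mapping, not the shader code from the Outerra blog: the exact form d = log2(1 + z) / log2(1 + far), the far plane at roughly Pluto’s distance, and the 24-bit integer depth buffer are assumptions made for the example.

```python
import math

FAR = 5.9e12      # assumed far plane: roughly Pluto's distance to the Sun, in metres
DEPTH_BITS = 24   # assumed size of an integer depth buffer


def log_depth(z, far=FAR):
    """Logarithmic depth in [0, 1]; the +1 keeps the value at 0 when z is 0."""
    return math.log2(1.0 + z) / math.log2(1.0 + far)


def quantize(d, bits=DEPTH_BITS):
    """Integer actually stored in a fixed-point depth buffer with the given bit count."""
    return round(d * (2 ** bits - 1))


# Objects 1000 km and 2000 km away now get clearly different depth values...
print(quantize(log_depth(1.0e6)), quantize(log_depth(2.0e6)))

# ...and so do two surfaces one millimetre apart, one metre from the camera.
print(quantize(log_depth(1.0)) != quantize(log_depth(1.001)))  # True
```

The logarithm spreads the available depth values so that the resolution is roughly proportional to the distance: fine steps close to the camera, coarse steps far away, which is what a scene ranging from millimetres to Pluto needs.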

For more information, you can also have a look at another article from the Outerra blog.

Writing this article has forced me to better understand the technique, and to fix a bug due to using the second method incorrectly (I had kept the multiplication by w from an earlier method).

With this out of the way, you can draw things very close by or extremely far away in a single scene! No need for hacks such as splitting the scene into a close-up part and a far-away part!
